What is a Markov Chain?

The concept of a “Markov chain” is foundational to diffusion models. It’s one of the bits of black-magic fuckery that make the seemingly impossible come to life with AI.

In Basic Terms

A Markov chain is a mathematical model of a sequence of events in which the probability of each next state depends only on the current state. That is to say, what happens next depends only on what is happening right now, not on any of the earlier states that led here. This is a stochastic model: randomness decides which state comes next, so unlike a deterministic, fully reproducible process, running the chain twice from the same starting point can produce different sequences.
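To make the definition concrete, here is a minimal Python sketch of a Markov chain. The two-state weather example and its transition probabilities in `TRANSITIONS` are made up for illustration, and `next_state` and `simulate` are hypothetical helper names. The key point is that `next_state` looks only at the current state when it randomly picks the next one.

```python
import random

# Hypothetical two-state weather chain (illustrative numbers, not real data).
# TRANSITIONS[current][nxt] = probability of moving from `current` to `nxt`.
TRANSITIONS = {
    "sunny": {"sunny": 0.8, "rainy": 0.2},
    "rainy": {"sunny": 0.4, "rainy": 0.6},
}


def next_state(current, rng):
    """Sample the next state using only the current state (the Markov property)."""
    options = TRANSITIONS[current]
    states = list(options)
    weights = [options[s] for s in states]
    return rng.choices(states, weights=weights, k=1)[0]


def simulate(start, steps, seed=None):
    """Run the chain for `steps` transitions and return every visited state."""
    rng = random.Random(seed)
    path = [start]
    for _ in range(steps):
        path.append(next_state(path[-1], rng))
    return path


print(simulate("sunny", 10, seed=0))
```

Changing the seed changes the path, which is the stochastic part; the transition table never looks further back than the current state.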

In Machine Learning + AI

Markov chains are highly relevant to diffusion models in machine learning. The word “diffusion” also has an older, related use: Markov chains can model how information flows, or diffuses, through a network. There is a certain probability of information passing from one node to the next, and the spread can be represented as a Markov chain whose state is the set of nodes that have the information so far.
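A minimal sketch of that network idea, assuming a made-up five-node network (`NETWORK`) and a single pass-along probability `p` for every connection. The state of the chain is the set of nodes that have the information; each step depends only on that current set, which is what makes it a Markov chain. The function names are hypothetical.

```python
import random

# Hypothetical small network: adjacency list of who talks to whom.
NETWORK = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "D"],
    "D": ["B", "C", "E"],
    "E": ["D"],
}


def spread_step(informed, p, rng):
    """One Markov step: the next informed set depends only on the current one."""
    new_informed = set(informed)
    for node in informed:
        for neighbor in NETWORK[node]:
            # Each informed node passes the information to each
            # uninformed neighbor independently with probability p.
            if neighbor not in informed and rng.random() < p:
                new_informed.add(neighbor)
    return frozenset(new_informed)


def simulate_spread(start, p, steps, seed=None):
    """Return the informed set at every step, starting from one node."""
    rng = random.Random(seed)
    state = frozenset([start])
    history = [state]
    for _ in range(steps):
        state = spread_step(state, p, rng)
        history.append(state)
    return history


for t, state in enumerate(simulate_spread("A", p=0.5, steps=5, seed=42)):
    print(t, sorted(state))
```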

Similar diffusion models, built on the same kind of Markov chain, are used to predict the spread of diseases, rumors, and innovations through a population, among other things. People use Markov chains to model other processes as well, like airport queues and animal population growth.

Back to their application in machine learning, though:

Markov Chains in Diffusion Models

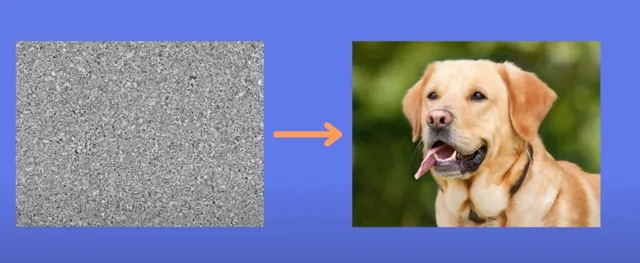

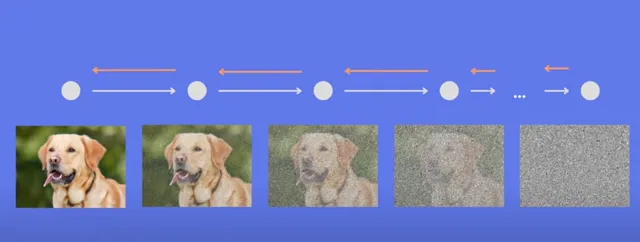

When training a diffusion model, what we basically do is add noise to an image in small steps, and that noising process is a Markov chain: each noisy version of the image is produced from the previous version only.
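For readers who want the math, the forward chain is commonly written as follows, assuming the DDPM (denoising diffusion probabilistic model) formulation, where beta_t is a small, hand-chosen noise variance for step t and T is the total number of steps. This notation is the standard DDPM one, not something defined elsewhere on this page.

```latex
q(x_t \mid x_{t-1}) = \mathcal{N}\!\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t \mathbf{I}\right),
\qquad
q(x_{1:T} \mid x_0) = \prod_{t=1}^{T} q(x_t \mid x_{t-1})

\bar{\alpha}_t = \prod_{s=1}^{t} (1-\beta_s),
\qquad
q(x_t \mid x_0) = \mathcal{N}\!\left(x_t;\ \sqrt{\bar{\alpha}_t}\,x_0,\ (1-\bar{\alpha}_t)\mathbf{I}\right)
```

The product in the first line is the Markov property in symbols: each factor conditions only on the previous step. The second line is a closed form that lets you jump from the clean image straight to any step t without looping.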

The noise added to the image of the dog at each step is random, which is where the stochastic part of the Markov chain comes in. We do this hundreds or thousands of times, adding a little Gaussian noise at every step. Eventually we end up at pure noise, with no trace of the dog left.
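Here is a rough NumPy sketch of that forward noising chain. It assumes the DDPM-style update x_t = sqrt(1 - beta_t) * x_{t-1} + sqrt(beta_t) * noise and a linear beta schedule from 1e-4 to 0.02 over 1000 steps (common choices, not something this page specifies), and it uses a random 8x8 array as a hypothetical stand-in for the dog image.

```python
import numpy as np


def linear_beta_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_1..beta_T (a linear schedule, as used in DDPM)."""
    return np.linspace(beta_start, beta_end, T)


def forward_diffusion(x0, betas, rng):
    """Run the forward Markov chain; each x_t is built only from x_{t-1} plus fresh noise."""
    xs = [x0]
    x = x0
    for beta in betas:
        noise = rng.standard_normal(x.shape)                 # new Gaussian noise every step
        x = np.sqrt(1.0 - beta) * x + np.sqrt(beta) * noise  # shrink the signal a bit, add noise
        xs.append(x)
    return xs


rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=(8, 8))  # hypothetical stand-in for a tiny grayscale image in [-1, 1]
betas = linear_beta_schedule(1000)
xs = forward_diffusion(x0, betas, rng)

print(len(xs))  # 1001: x_0 plus one image per step
# How much of x_0 survives in x_T: sqrt of the product of (1 - beta_t), well under 0.01.
print(np.sqrt(np.prod(1.0 - betas)))
```

Because the signal is scaled down slightly at every step while noise is added, the last image is almost pure standard Gaussian noise.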

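Then we have a neural net learn from those noisy images, step by step: in the common DDPM-style setup, it is trained to predict the noise that was added at a given step. Once trained, we ask the neural net to go the other way: start from pure noise and remove a little of it at a time until an image appears, such as a picture of a dog. This is called reverse diffusion, and it is itself a Markov chain run backwards, with each step depending only on the current noisy image.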
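A minimal sketch of one training example, assuming the DDPM-style objective in which the net predicts the added noise and is scored with mean squared error. Instead of running the chain step by step, it uses the closed form x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * noise, where alpha_bar_t is the running product of (1 - beta). `zero_model` is a hypothetical placeholder for the real network, so the loss here only shows the mechanics.

```python
import numpy as np


def alpha_bars(betas):
    """Cumulative products: alpha_bar_t = prod over s <= t of (1 - beta_s)."""
    return np.cumprod(1.0 - betas)


def noisy_sample(x0, t, a_bar, rng):
    """Jump straight to x_t with the closed form q(x_t | x_0) instead of looping t times."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(a_bar[t]) * x0 + np.sqrt(1.0 - a_bar[t]) * eps
    return x_t, eps


def training_loss(predict_noise, x0, betas, rng):
    """One DDPM-style training example: pick a random step, noise x0, score the noise guess."""
    a_bar = alpha_bars(betas)
    t = rng.integers(len(betas))          # random timestep index in 0..T-1
    x_t, eps = noisy_sample(x0, t, a_bar, rng)
    eps_hat = predict_noise(x_t, t)       # the neural net's guess at the added noise
    return np.mean((eps - eps_hat) ** 2)  # mean squared error on the noise


def zero_model(x_t, t):
    """Hypothetical stand-in for the network: always guesses 'no noise'."""
    return np.zeros_like(x_t)


rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=(8, 8))
betas = np.linspace(1e-4, 0.02, 1000)
print(training_loss(zero_model, x0, betas, rng))
```

In real training this loss would be averaged over many images and timesteps and minimized by gradient descent on the network's weights.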

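More specifically, the reverse diffusion is done step by step by a convolutional neural network (CNN), typically a U-Net. A U-Net first downsamples the noisy image through convolutional layers into smaller, coarser feature maps, then upsamples back to the original resolution, with skip connections that copy feature maps from each downsampling stage across to the matching upsampling stage. The name comes from the U shape of that architecture diagram. Because its output has the same size as its input, it can produce a per-pixel estimate of the noise in the image.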
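And a sketch of the reverse chain itself, again assuming the DDPM-style sampling rule (with the per-step noise variance set to beta_t, one of the options used in DDPM). `predict_noise` is where the U-Net would go; `zero_model` below is a hypothetical placeholder, so the result is still just noise rather than a dog.

```python
import numpy as np


def reverse_diffusion(predict_noise, betas, shape, rng):
    """DDPM-style ancestral sampling: walk the Markov chain backwards from pure noise."""
    alphas = 1.0 - betas
    a_bar = np.cumprod(alphas)
    x = rng.standard_normal(shape)  # x_T: start from pure Gaussian noise
    for t in reversed(range(len(betas))):
        eps_hat = predict_noise(x, t)  # the U-Net's job in a real model
        # Remove the predicted noise to get the mean of x_{t-1} given x_t.
        mean = (x - betas[t] / np.sqrt(1.0 - a_bar[t]) * eps_hat) / np.sqrt(alphas[t])
        if t > 0:
            # Add fresh noise: the backwards walk is stochastic too.
            x = mean + np.sqrt(betas[t]) * rng.standard_normal(shape)
        else:
            x = mean  # the final step adds no noise
    return x


def zero_model(x_t, t):
    """Hypothetical placeholder for a trained U-Net."""
    return np.zeros_like(x_t)


rng = np.random.default_rng(0)
betas = np.linspace(1e-4, 0.02, 1000)
sample = reverse_diffusion(zero_model, betas, (8, 8), rng)
print(sample.shape)  # (8, 8)
```

Note the `if t > 0` branch: every step except the last adds fresh random noise, so two runs with different seeds produce different images from the same trained model.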

That is basically how a diffusion model works: it learns from the process of adding noise randomly and incrementally, and then applies what it learned to move backwards, from noise to image.
